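Preface

The previous, theory-focused article covered several YUV-to-RGB conversion formulas and some optimizations. This article goes the other way, from RGB to YUV, and puts it into practice with OpenGL ES: a shader converts an RGB image into YUV data, which is then saved to local storage.

You may ask: YUV-to-RGB is needed for rendering, but where is RGB-to-YUV used? For video encoding, MediaCodec paired with a Surface does the job, and no RGB-to-YUV step seems to be involved. That holds for hardware encoding, but what about software encoding? Video pipelines usually prefer hardware encoding and keep software encoding as a fallback. When hardware encoding is unavailable, you may need the x264 library for software encoding, and that is where RGB-to-YUV conversion comes in.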

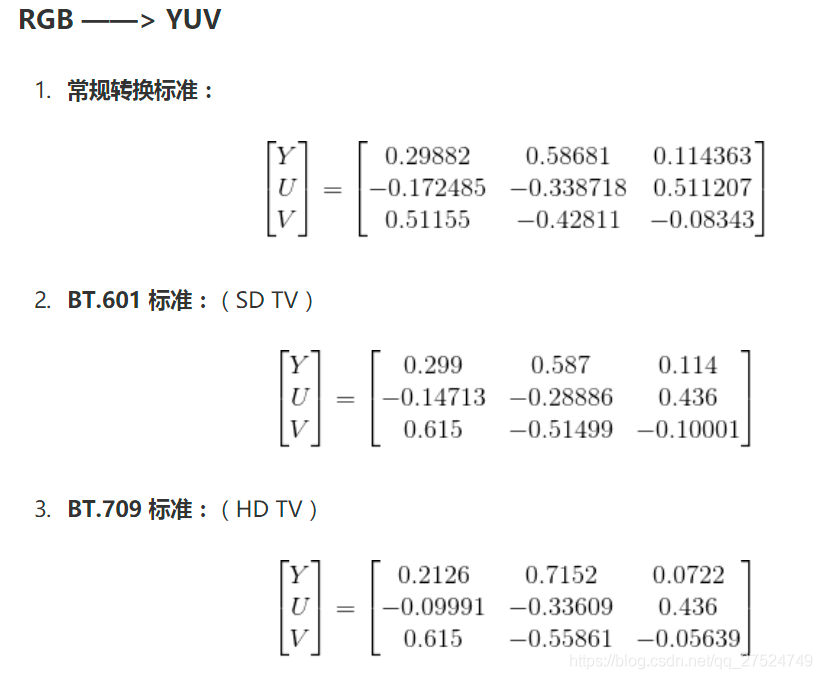

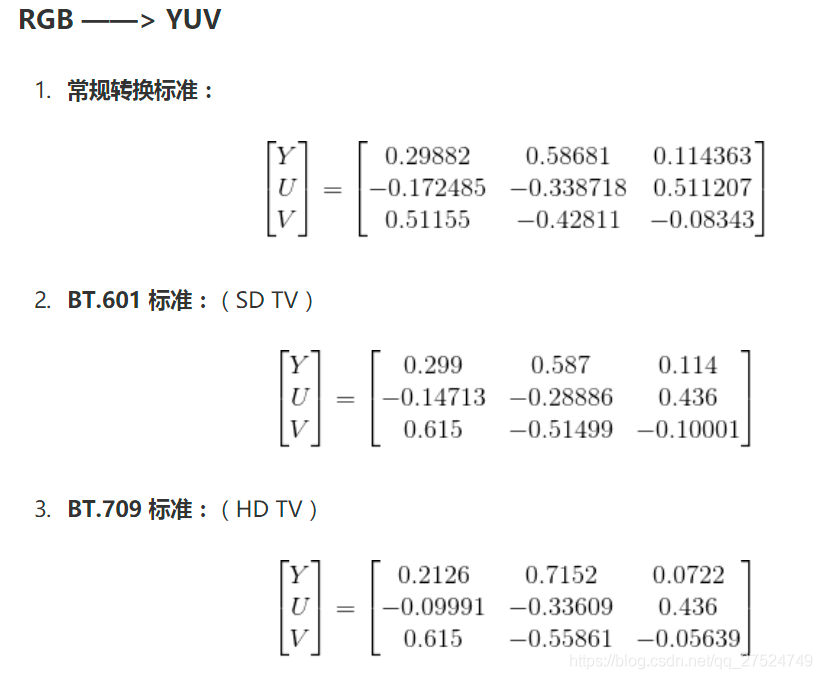

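RGB to YUV conversion formulas

The earlier article in this series, "OpenGL ES: Rendering YUV Data", introduced several YUV standards. The RGB-to-YUV formulas for two of them are as follows:

RGB to BT.601 YUV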

Y = 0.257R + 0.504G + 0.098B + 16

Cb = -0.148R - 0.291G + 0.439B + 128

Cr = 0.439R - 0.368G - 0.071B + 128

RGB to BT.709 YUV

Y = 0.183R + 0.614G + 0.062B + 16

Cb = -0.101R - 0.339G + 0.439B + 128

Cr = 0.439R - 0.399G - 0.040B + 128

The same conversion can also be written as a matrix multiplication, which is often more convenient.
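To make the matrix form concrete, here is a minimal C++ sketch of the BT.601 formulas above, written as a 3x3 coefficient matrix plus an offset vector. The names `YCbCr`, `kBt601`, `kOffset` and `rgbToBt601` are hypothetical and exist only for this illustration; the rounding and clamping steps are assumptions, since the formulas themselves do not specify them.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper type for this sketch: one 8-bit Y/Cb/Cr triple.
struct YCbCr {
    uint8_t y, cb, cr;
};

// Rows hold the Y, Cb and Cr coefficients of the BT.601 formulas above.
constexpr double kBt601[3][3] = {
    { 0.257,  0.504,  0.098},
    {-0.148, -0.291,  0.439},
    { 0.439, -0.368, -0.071},
};
constexpr double kOffset[3] = {16.0, 128.0, 128.0};

// Multiply the matrix by (R, G, B), add the offset, then round and clamp to 0..255.
inline YCbCr rgbToBt601(uint8_t r, uint8_t g, uint8_t b) {
    const double rgb[3] = {double(r), double(g), double(b)};
    uint8_t out[3];
    for (int row = 0; row < 3; ++row) {
        double v = kOffset[row];
        for (int col = 0; col < 3; ++col) {
            v += kBt601[row][col] * rgb[col];
        }
        // Assumption: round to nearest and clamp, which the formulas leave open.
        out[row] = static_cast<uint8_t>(std::min(255L, std::max(0L, std::lround(v))));
    }
    return {out[0], out[1], out[2]};
}
```

Swapping in the BT.709 rows from the second set of formulas gives the BT.709 variant without touching the rest of the function.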

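Converting RGB to YUV

First, an overview of the process: convert the RGB data to YUV with the formulas, lay the YUV data out as RGBA pixels (so that it can be read back later), and finally read the YUV data with glReadPixels.

For this kind of conversion, OpenGL ES commonly takes RGBA, luminance or luminance alpha data as texture input, and most implementations only output RGBA. So although the output data is YUV, it still has to be stored in, and read from, the texture as RGBA.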

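Take NV21 as an example. An NV21 frame occupies width * height * 3 / 2 bytes, whereas the same frame stored as RGBA occupies width * height * 4 bytes (RGBA has four channels). The two sizes clearly do not match.

So how should the OpenGL buffer be sized so that an RGBA output holds exactly the YUV output? Make the output width / 4 wide and height * 3 / 2 high.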

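Why these dimensions? Although the goal is to convert RGB to YUV, the GLenum format used for both the input and the read-back is still RGBA, so every output pixel carries 4 bytes. The byte counts then match:

width * height * 3 / 2 = (width / 4) * (height * 3 / 2) * 4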
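The size arithmetic can be checked with a few lines of C++. This is only a sketch: `PackedSize`, `packedRenderTargetSize`, `nv21ByteCount` and `rgbaByteCount` are hypothetical names, and the requirement that the width is a multiple of 4 and the height is even is an assumption that follows from the integer divisions, not something the original code enforces.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper type: size of the RGBA render target that holds the packed NV21 data.
struct PackedSize {
    int width;   // in RGBA pixels
    int height;  // in rows
};

inline PackedSize packedRenderTargetSize(int width, int height) {
    // Assumption: four Y bytes share one RGBA pixel, so the width must divide by 4;
    // the VU plane has height / 2 rows, so the height must be even.
    assert(width > 0 && width % 4 == 0);
    assert(height > 0 && height % 2 == 0);
    return {width / 4, height * 3 / 2};
}

// Bytes needed for an NV21 frame: a full-size Y plane plus a half-size VU plane.
inline std::size_t nv21ByteCount(int width, int height) {
    return static_cast<std::size_t>(width) * height * 3 / 2;
}

// Bytes occupied by the RGBA render target (4 bytes per pixel).
inline std::size_t rgbaByteCount(PackedSize size) {
    return static_cast<std::size_t>(size.width) * size.height * 4;
}
```

For a 1280 x 720 image this gives a 320 x 1080 render target, and both byte counts come out as 1382400.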

In memory, the YUV data is laid out like this:

    width / 4
|--------------|
|              |
|              |  h
|      Y       |
|--------------|
|   U   |   V  |
|       |      |  h / 2
|--------------|

After normalizing the coordinates, the same layout looks like this:

(0,0)  width / 4  (1,0)
|--------------|
|              |
|              |  h
|      Y       |
|--------------| (1,2/3)
|   U   |   V  |
|       |      |  h / 2
|--------------|
(0,1)           (1,1)

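The layout shows that for texture coordinates y < 2/3 the whole source texture has to be sampled once to generate the Y data, and for texture coordinates y > 2/3 the whole source texture has to be sampled once more to generate the UV data.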
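The split at 2/3 amounts to remapping the output row into the source texture twice. The following C++ sketch mirrors the remapping that the fragment shader in this article performs; `PlaneCoord` and `mapOutputRow` are hypothetical names used only for illustration.

```cpp
#include <cassert>

// Hypothetical helper type: which plane an output row belongs to and
// the normalized row of the source texture it samples.
struct PlaneCoord {
    bool isLuma;    // true for the Y region, false for the VU region
    float sourceY;  // normalized y in the source texture, 0..1
};

inline PlaneCoord mapOutputRow(float outputY) {
    const float kDivide = 2.0f / 3.0f;
    if (outputY <= kDivide) {
        // The Y region [0, 2/3] is stretched back to the full source height.
        return {true, outputY * 3.0f / 2.0f};
    }
    // The VU region (2/3, 1] is also stretched to the full source height,
    // so it covers the source with half as many rows.
    return {false, (outputY - kDivide) * 3.0f};
}
```

An output row at y = 1/3 and an output row at y = 5/6 both map to the middle of the source image (y = 0.5), once for luma and once for chroma.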

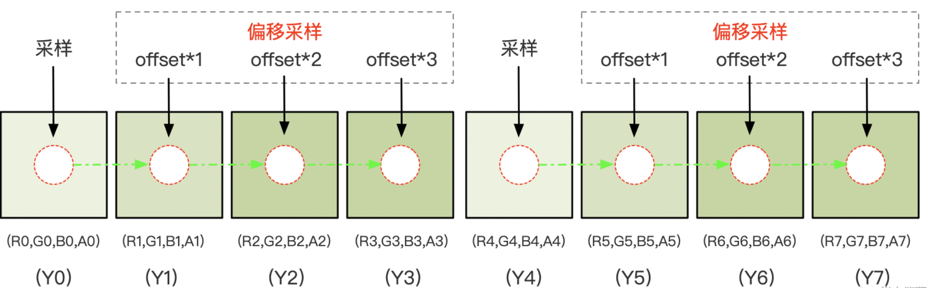

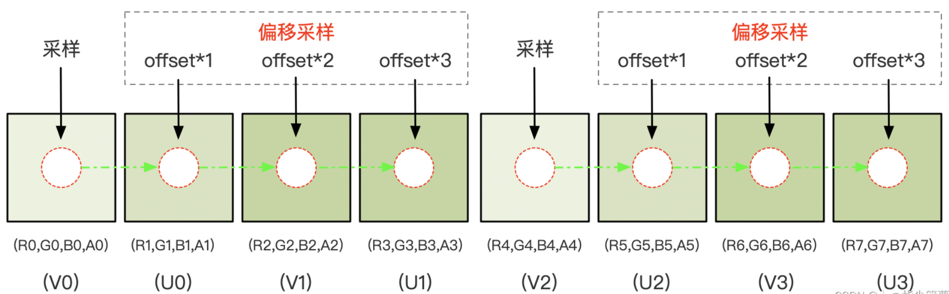

The viewport also has to be set to the packed output size:

glViewport(0, 0, width / 4, height * 3 / 2);

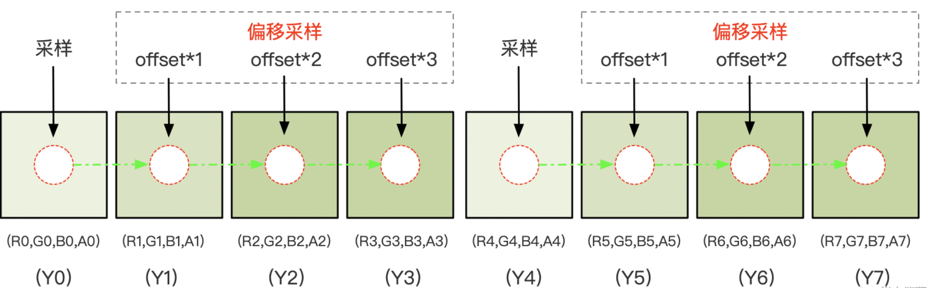

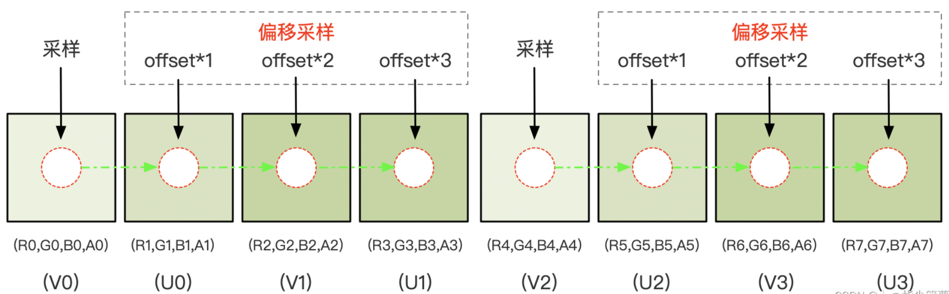

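Because the viewport is 1/4 of the original width, you can think of it as sampling every fourth pixel of the source image. The Y plane needs a value for every pixel, so each fragment takes three additional offset samples.

Likewise, generating the UV data needs three additional offset samples per fragment.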
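It can help to work out exactly where those four samples land in the source texture. The sketch below computes the sample positions of one output column in source-texel units, where texel j spans [j, j + 1) and has its centre at j + 0.5. `samplePositions` and its `firstOffset` parameter are hypothetical and only serve this illustration.

```cpp
#include <array>
#include <cassert>

// Returns the four horizontal sample positions, in source-texel units, for output column `col`.
// `firstOffset` is the offset (in texels) of the first sample; the others follow at +1, +2, +3.
inline std::array<double, 4> samplePositions(int col, int width, double firstOffset) {
    assert(width > 0 && width % 4 == 0);
    const int outWidth = width / 4;
    const double texel = 1.0 / width;               // the normalized one-texel offset
    const double centerX = (col + 0.5) / outWidth;  // normalized x at the fragment centre
    std::array<double, 4> positions{};
    for (int k = 0; k < 4; ++k) {
        positions[k] = (centerX + (firstOffset + k) * texel) * width;
    }
    return positions;
}
```

With offsets of 0, 1, 2 and 3 texels, column 0 of an 8-pixel-wide image samples at 2, 3, 4 and 5, which are texel boundaries, so a GL_LINEAR filter would blend two neighbouring texels for each value. Starting at -1.5 texels instead gives 0.5, 1.5, 2.5 and 3.5, the centres of texels 0 to 3. This is an observation about the arithmetic under the stated conventions, not something the original code does.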

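In the shader, the offset variable must be a normalized value: 1.0 / width. Following the diagram, for texture coordinates y < 2/3, one sample plus three offset samples read 4 RGBA pixels and produce one output pixel (Y0, Y1, Y2, Y3); once the whole range has been covered, a buffer of width * height bytes has been filled.

For texture coordinates y > 2/3, one sample plus three offset samples read 4 RGBA pixels and produce one output pixel (V0, U0, V1, U1). Because the UV region is only height / 2 rows high, the VU plane is sampled on every other row in the vertical direction; once the whole range has been covered, a buffer of width * height / 2 bytes has been filled.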
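A CPU reference of the same packing is handy for comparing against the GPU output. The following is a minimal sketch, not the article's implementation: `packNv21Reference` and `toUnorm8` are hypothetical names, the input is assumed to be tightly packed RGBA8, each value is taken from a single source pixel (no filtering), and the VU rows are assumed to come from the even source rows. The coefficients are the ones used in the fragment shader of this article.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Clamp a normalized value to [0, 1] and convert it to a byte, like an 8-bit render target.
inline uint8_t toUnorm8(double v) {
    v = std::min(1.0, std::max(0.0, v));
    return static_cast<uint8_t>(std::lround(v * 255.0));
}

// Hypothetical CPU reference: packs RGBA8 pixels into NV21 (Y plane followed by interleaved VU).
inline std::vector<uint8_t> packNv21Reference(const uint8_t* rgba, int width, int height) {
    assert(rgba != nullptr && width % 4 == 0 && height % 2 == 0);
    std::vector<uint8_t> out(static_cast<std::size_t>(width) * height * 3 / 2);
    auto channel = [&](int x, int y, int c) {
        return rgba[(static_cast<std::size_t>(y) * width + x) * 4 + c] / 255.0;
    };
    // Y plane: one byte per source pixel.
    for (int y = 0; y < height; ++y) {
        for (int x = 0; x < width; ++x) {
            const double r = channel(x, y, 0), g = channel(x, y, 1), b = channel(x, y, 2);
            out[static_cast<std::size_t>(y) * width + x] =
                toUnorm8(0.299 * r + 0.587 * g + 0.114 * b);
        }
    }
    // VU plane: height / 2 rows; even columns store V, odd columns store U.
    uint8_t* vu = out.data() + static_cast<std::size_t>(width) * height;
    for (int row = 0; row < height / 2; ++row) {
        const int y = row * 2;  // assumption: use the even source row
        for (int x = 0; x < width; ++x) {
            const double r = channel(x, y, 0), g = channel(x, y, 1), b = channel(x, y, 2);
            const double value = (x % 2 == 0)
                ? 0.615 * r - 0.515 * g - 0.100 * b    // V
                : -0.147 * r - 0.289 * g + 0.436 * b;  // U
            vu[static_cast<std::size_t>(row) * width + x] = toUnorm8(value + 0.5);
        }
    }
    return out;
}
```

Because the GPU path may filter between neighbouring texels, small differences between this reference and the shader output are to be expected on images with sharp edges.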

Main code

RGBtoYUVOpengl.cpp

#include "../utils/Log.h"
#include "RGBtoYUVOpengl.h"

// Vertex shader: passes the position and the texture coordinate through unchanged
static const char *ver = "#version 300 es\n"
                         "in vec4 aPosition;\n"
                         "in vec2 aTexCoord;\n"
                         "out vec2 v_texCoord;\n"
                         "void main() {\n"
                         "  v_texCoord = aTexCoord;\n"
                         "  gl_Position = aPosition;\n"
                         "}\n";

// Fragment shader: packs four Y values, or two VU pairs, into each RGBA output pixel.
// Note: COEF_Y/COEF_U/COEF_V are the analog Y'UV coefficients, which differ from the
// limited-range BT.601 formulas listed earlier (no +16 offset on Y).
static const char *fragment = "#version 300 es\n"
                              "precision mediump float;\n"
                              "in vec2 v_texCoord;\n"
                              "layout(location = 0) out vec4 outColor;\n"
                              "uniform sampler2D s_TextureMap;\n"
                              "uniform float u_Offset;\n"
                              "const vec3 COEF_Y = vec3(0.299, 0.587, 0.114);\n"
                              "const vec3 COEF_U = vec3(-0.147, -0.289, 0.436);\n"
                              "const vec3 COEF_V = vec3(0.615, -0.515, -0.100);\n"
                              "const float UV_DIVIDE_LINE = 2.0 / 3.0;\n"
                              "void main(){\n"
                              "    vec2 texelOffset = vec2(u_Offset, 0.0);\n"
                              // Y region: map [0, 2/3] back to the full source height
                              "    if (v_texCoord.y <= UV_DIVIDE_LINE) {\n"
                              "        vec2 texCoord = vec2(v_texCoord.x, v_texCoord.y * 3.0 / 2.0);\n"
                              "        vec4 color0 = texture(s_TextureMap, texCoord);\n"
                              "        vec4 color1 = texture(s_TextureMap, texCoord + texelOffset);\n"
                              "        vec4 color2 = texture(s_TextureMap, texCoord + texelOffset * 2.0);\n"
                              "        vec4 color3 = texture(s_TextureMap, texCoord + texelOffset * 3.0);\n"
                              "        float y0 = dot(color0.rgb, COEF_Y);\n"
                              "        float y1 = dot(color1.rgb, COEF_Y);\n"
                              "        float y2 = dot(color2.rgb, COEF_Y);\n"
                              "        float y3 = dot(color3.rgb, COEF_Y);\n"
                              "        outColor = vec4(y0, y1, y2, y3);\n"
                              "    } else {\n"
                              // VU region: map (2/3, 1] back to the full source height
                              "        vec2 texCoord = vec2(v_texCoord.x, (v_texCoord.y - UV_DIVIDE_LINE) * 3.0);\n"
                              "        vec4 color0 = texture(s_TextureMap, texCoord);\n"
                              "        vec4 color1 = texture(s_TextureMap, texCoord + texelOffset);\n"
                              "        vec4 color2 = texture(s_TextureMap, texCoord + texelOffset * 2.0);\n"
                              "        vec4 color3 = texture(s_TextureMap, texCoord + texelOffset * 3.0);\n"
                              "        float v0 = dot(color0.rgb, COEF_V) + 0.5;\n"
                              "        float u0 = dot(color1.rgb, COEF_U) + 0.5;\n"
                              "        float v1 = dot(color2.rgb, COEF_V) + 0.5;\n"
                              "        float u1 = dot(color3.rgb, COEF_U) + 0.5;\n"
                              "        outColor = vec4(v0, u0, v1, u1);\n"
                              "    }\n"
                              "}\n";

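// Draw the rectangle as two triangles (triangle strip):
// points 1, 2, 3 form the first triangle and points 2, 3, 4 form the second
const static GLfloat VERTICES[] = {
        1.0f, -1.0f,   // bottom right
        1.0f, 1.0f,    // top right
        -1.0f, -1.0f,  // bottom left
        -1.0f, 1.0f    // top left
};

// Texture coordinates for the FBO pass (same orientation as the phone screen
// coordinate system, with the origin at the bottom-left corner).
// Keep the order consistent with VERTICES.
const static GLfloat TEXTURE_COORD[] = {
        1.0f, 0.0f,  // bottom right
        1.0f, 1.0f,  // top right
        0.0f, 0.0f,  // bottom left
        0.0f, 1.0f   // top left
};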

RGBtoYUVOpengl::RGBtoYUVOpengl() {
    initGlProgram(ver, fragment);
    positionHandle = glGetAttribLocation(program, "aPosition");
    textureHandle = glGetAttribLocation(program, "aTexCoord");
    textureSampler = glGetUniformLocation(program, "s_TextureMap");
    u_Offset = glGetUniformLocation(program, "u_Offset");
    LOGD("program:%d", program);
    LOGD("positionHandle:%d", positionHandle);
    LOGD("textureHandle:%d", textureHandle);
    LOGD("textureSample:%d", textureSampler);
    LOGD("u_Offset:%d", u_Offset);
}

RGBtoYUVOpengl::~RGBtoYUVOpengl() noexcept {
}

void RGBtoYUVOpengl::fboPrepare() {
    glGenTextures(1, &fboTextureId);
    // Bind the texture
    glBindTexture(GL_TEXTURE_2D, fboTextureId);
    // Set the wrap and filter modes of the bound texture
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_CLAMP_TO_EDGE);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_CLAMP_TO_EDGE);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR);
    glBindTexture(GL_TEXTURE_2D, GL_NONE);

    glGenFramebuffers(1, &fboId);
    glBindFramebuffer(GL_FRAMEBUFFER, fboId);
    // Bind the texture and attach it to the FBO
    glBindTexture(GL_TEXTURE_2D, fboTextureId);
    glFramebufferTexture2D(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0, GL_TEXTURE_2D, fboTextureId, 0);
    // Texture size: (width / 4) x (height * 3 / 2) RGBA pixels = width * height * 3 / 2 bytes.
    // Integer arithmetic avoids the implicit double-to-int conversion of "imageHeight * 1.5".
    glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, imageWidth / 4, imageHeight * 3 / 2, 0, GL_RGBA, GL_UNSIGNED_BYTE, nullptr);
    // Check the FBO status
    if (glCheckFramebufferStatus(GL_FRAMEBUFFER) != GL_FRAMEBUFFER_COMPLETE) {
        LOGE("FBOSample::CreateFrameBufferObj glCheckFramebufferStatus status != GL_FRAMEBUFFER_COMPLETE");
    }
    // Unbind
    glBindTexture(GL_TEXTURE_2D, GL_NONE);
    glBindFramebuffer(GL_FRAMEBUFFER, GL_NONE);
}

// Render logic
void RGBtoYUVOpengl::onDraw() {
    // Render into the FBO: bind it first
    glBindFramebuffer(GL_FRAMEBUFFER, fboId);
    glPixelStorei(GL_UNPACK_ALIGNMENT, 1);
    // Set the viewport to the packed output size
    glViewport(0, 0, imageWidth / 4, imageHeight * 3 / 2);
    glClearColor(0.0f, 1.0f, 0.0f, 1.0f);
    glClear(GL_COLOR_BUFFER_BIT);
    glUseProgram(program);

    // Activate texture unit 2 and point the sampler at it
    glActiveTexture(GL_TEXTURE2);
    glUniform1i(textureSampler, 2);
    // Bind the source RGBA texture
    glBindTexture(GL_TEXTURE_2D, textureId);

    // Set the offset: the width of one source texel in normalized coordinates
    float texelOffset = 1.0f / (float) imageWidth;
    glUniform1f(u_Offset, texelOffset);

    /**
     * glVertexAttribPointer parameters:
     * size       how many numbers describe one point; here two numbers per point
     * normalized whether the data needs normalizing; not needed, it is already normalized
     * stride     byte distance between consecutive vertices; 0 if they are tightly packed
     */
    // Enable the vertex data
    glEnableVertexAttribArray(positionHandle);
    glVertexAttribPointer(positionHandle, 2, GL_FLOAT, GL_FALSE, 0, VERTICES);
    // Texture coordinates
    glEnableVertexAttribArray(textureHandle);
    glVertexAttribPointer(textureHandle, 2, GL_FLOAT, GL_FALSE, 0, TEXTURE_COORD);
    // Draw the rectangle as two triangles from 4 vertices
    glDrawArrays(GL_TRIANGLE_STRIP, 0, 4);
    glUseProgram(0);
    // Disable the vertex arrays
    glDisableVertexAttribArray(positionHandle);
    glDisableVertexAttribArray(textureHandle);
    if (nullptr != eglHelper) {
        eglHelper->swapBuffers();
    }
    glBindTexture(GL_TEXTURE_2D, 0);
    // Unbind the FBO
    glBindFramebuffer(GL_FRAMEBUFFER, 0);
}

// Upload the RGB image data
void RGBtoYUVOpengl::setPixel(void *data, int width, int height, int length) {
    LOGD("texture setPixel");
    imageWidth = width;
    imageHeight = height;
    // Prepare the FBO
    fboPrepare();

    glGenTextures(1, &textureId);
    // Activate the texture unit. These two calls go together: the unit activated with
    // glActiveTexture is the one the sampler2D uniform must be set to.
    // The default unit is 0; if another unit is used, it has to be activated again in onDraw.
    // glActiveTexture(GL_TEXTURE0);
    // glUniform1i(textureSampler, 0);
    // For example, this is equivalent (glUniform1i only takes effect while the program is
    // current; onDraw sets the sampler again after glUseProgram):
    glActiveTexture(GL_TEXTURE2);
    glUniform1i(textureSampler, 2);

    // Bind the texture
    glBindTexture(GL_TEXTURE_2D, textureId);
    // Set the wrap and filter modes of the bound texture
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_S, GL_REPEAT);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_WRAP_T, GL_REPEAT);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_LINEAR);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_LINEAR);
    glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, width, height, 0, GL_RGBA, GL_UNSIGNED_BYTE, data);
    // Generate mipmaps
    glGenerateMipmap(GL_TEXTURE_2D);
    // Unbind
    glBindTexture(GL_TEXTURE_2D, 0);
}

// Read back the rendered YUV data
void RGBtoYUVOpengl::readYUV(uint8_t **data, int *width, int *height) {
    *width = imageWidth;
    *height = imageHeight;
    // Read from the FBO: bind it first
    glBindFramebuffer(GL_FRAMEBUFFER, fboId);
    glBindTexture(GL_TEXTURE_2D, fboTextureId);
    glFramebufferTexture2D(GL_FRAMEBUFFER, GL_COLOR_ATTACHMENT0,
                           GL_TEXTURE_2D, fboTextureId, 0);
    // NV21 size is width * height * 3 / 2 bytes.
    // The buffer is allocated with new[], so it has to be released with delete[].
    *data = new uint8_t[imageWidth * imageHeight * 3 / 2];
    glReadPixels(0, 0, imageWidth / 4, imageHeight * 3 / 2, GL_RGBA, GL_UNSIGNED_BYTE, *data);
    glBindTexture(GL_TEXTURE_2D, 0);
    // Unbind the FBO
    glBindFramebuffer(GL_FRAMEBUFFER, 0);
}
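Code that consumes the read-back buffer often wants the two planes separately, for example an encoder API that takes one pointer per plane. A minimal sketch of that split follows; `Nv21Planes` and `splitNv21` are hypothetical names, and the sketch assumes the buffer holds width * height Y bytes followed by width * height / 2 interleaved VU bytes, as described in the layout section.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Hypothetical view over a packed NV21 buffer; it does not own the memory.
struct Nv21Planes {
    const uint8_t* y;    // width * height luma bytes
    std::size_t ySize;
    const uint8_t* vu;   // interleaved V, U bytes at half vertical resolution
    std::size_t vuSize;
};

inline Nv21Planes splitNv21(const uint8_t* data, int width, int height) {
    assert(data != nullptr && width > 0 && height > 0 && height % 2 == 0);
    const std::size_t ySize = static_cast<std::size_t>(width) * height;
    // The VU plane starts right after the Y plane and is half its size.
    return {data, ySize, data + ySize, ySize / 2};
}
```

The view only stays valid while the underlying buffer is alive, so it should not outlive the memory it points into.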

The main logic of the Activity is as follows:

public class RGBToYUVActivity extends AppCompatActivity {

    protected MyGLSurfaceView myGLSurfaceView;

    @Override
    protected void onCreate(@Nullable Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        setContentView(R.layout.activity_rgb_to_yuv);
        myGLSurfaceView = findViewById(R.id.my_gl_surface_view);
        myGLSurfaceView.setOpenGlListener(new MyGLSurfaceView.OnOpenGlListener() {
            @Override
            public BaseOpengl onOpenglCreate() {
                return new RGBtoYUVOpengl();
            }

            @Override
            public Bitmap requestBitmap() {
                BitmapFactory.Options options = new BitmapFactory.Options();
                options.inScaled = false;
                return BitmapFactory.decodeResource(getResources(), R.mipmap.ic_smile, options);
            }

            @Override
            public void readPixelResult(byte[] bytes) {
                if (null != bytes) {
                }
            }

            // Callback that receives the data read by RGBtoYUVOpengl::readYUV
            @Override
            public void readYUVResult(byte[] bytes) {
                if (null != bytes) {
                    String fileName = System.currentTimeMillis() + ".yuv";
                    File fileParent = getFilesDir();
                    if (!fileParent.exists()) {
                        fileParent.mkdirs();
                    }
                    File file = new File(fileParent, fileName);
                    // try-with-resources closes the stream even if the write fails
                    try (FileOutputStream fos = new FileOutputStream(file)) {
                        fos.write(bytes, 0, bytes.length);
                        fos.flush();
                        Toast.makeText(RGBToYUVActivity.this, "YUV image saved: " + file.getAbsolutePath(), Toast.LENGTH_LONG).show();
                    } catch (Exception e) {
                        Log.v("fly_learn_opengl", "Failed to save image: " + e.getMessage());
                        Toast.makeText(RGBToYUVActivity.this, "Failed to save YUV image", Toast.LENGTH_LONG).show();
                    }
                }
            }
        });

        Button button = findViewById(R.id.bt_rgb_to_yuv);
        button.setOnClickListener(new View.OnClickListener() {
            @Override
            public void onClick(View view) {
                myGLSurfaceView.readYuvData();
            }
        });

        ImageView iv_rgb = findViewById(R.id.iv_rgb);
        iv_rgb.setImageResource(R.mipmap.ic_smile);
    }
}

The custom SurfaceView looks like this:

public class MyGLSurfaceView extends SurfaceView implements SurfaceHolder.Callback {

    private final static int MSG_CREATE_GL = 101;
    private final static int MSG_CHANGE_GL = 102;
    private final static int MSG_DRAW_GL = 103;
    private final static int MSG_DESTROY_GL = 104;
    private final static int MSG_READ_PIXEL_GL = 105;
    private final static int MSG_UPDATE_BITMAP_GL = 106;
    private final static int MSG_UPDATE_YUV_GL = 107;
    private final static int MSG_READ_YUV_GL = 108;

    public BaseOpengl baseOpengl;
    private OnOpenGlListener onOpenGlListener;
    private HandlerThread handlerThread;
    private Handler renderHandler;

    public int surfaceWidth;
    public int surfaceHeight;

    public MyGLSurfaceView(Context context) {
        this(context, null);
    }

    public MyGLSurfaceView(Context context, AttributeSet attrs) {
        super(context, attrs);
        getHolder().addCallback(this);
        // All GL work runs on this dedicated render thread
        handlerThread = new HandlerThread("RenderHandlerThread");
        handlerThread.start();
        renderHandler = new Handler(handlerThread.getLooper()) {
            @Override
            public void handleMessage(@NonNull Message msg) {
                switch (msg.what) {
                    case MSG_CREATE_GL:
                        baseOpengl = onOpenGlListener.onOpenglCreate();
                        Surface surface = (Surface) msg.obj;
                        if (null != baseOpengl) {
                            baseOpengl.surfaceCreated(surface);
                            Bitmap bitmap = onOpenGlListener.requestBitmap();
                            if (null != bitmap) {
                                baseOpengl.setBitmap(bitmap);
                            }
                        }
                        break;

                    case MSG_CHANGE_GL:
                        if (null != baseOpengl) {
                            Size size = (Size) msg.obj;
                            baseOpengl.surfaceChanged(size.getWidth(), size.getHeight());
                        }
                        break;

                    case MSG_DRAW_GL:
                        if (null != baseOpengl) {
                            baseOpengl.onGlDraw();
                        }
                        break;

                    case MSG_READ_PIXEL_GL:
                        if (null != baseOpengl) {
                            byte[] bytes = baseOpengl.readPixel();
                            if (null != bytes && null != onOpenGlListener) {
                                onOpenGlListener.readPixelResult(bytes);
                            }
                        }
                        break;

                    case MSG_READ_YUV_GL:
                        if (null != baseOpengl) {
                            byte[] bytes = baseOpengl.readYUVResult();
                            if (null != bytes && null != onOpenGlListener) {
                                onOpenGlListener.readYUVResult(bytes);
                            }
                        }
                        break;

                    case MSG_UPDATE_BITMAP_GL:
                        if (null != baseOpengl) {
                            Bitmap bitmap = onOpenGlListener.requestBitmap();
                            if (null != bitmap) {
                                baseOpengl.setBitmap(bitmap);
                                baseOpengl.onGlDraw();
                            }
                        }
                        break;

                    case MSG_UPDATE_YUV_GL:
                        if (null != baseOpengl) {
                            YUVBean yuvBean = (YUVBean) msg.obj;
                            if (null != yuvBean) {
                                baseOpengl.setYuvData(yuvBean.getyData(), yuvBean.getUvData(), yuvBean.getWidth(), yuvBean.getHeight());
                                baseOpengl.onGlDraw();
                            }
                        }
                        break;

                    case MSG_DESTROY_GL:
                        if (null != baseOpengl) {
                            baseOpengl.surfaceDestroyed();
                        }
                        break;
                }
            }
        };
    }

    public void setOpenGlListener(OnOpenGlListener listener) {
        this.onOpenGlListener = listener;
    }

    @Override
    public void surfaceCreated(@NonNull SurfaceHolder surfaceHolder) {
        Message message = Message.obtain();
        message.what = MSG_CREATE_GL;
        message.obj = surfaceHolder.getSurface();
        renderHandler.sendMessage(message);
    }

    @Override
    public void surfaceChanged(@NonNull SurfaceHolder surfaceHolder, int i, int w, int h) {
        Message message = Message.obtain();
        message.what = MSG_CHANGE_GL;
        message.obj = new Size(w, h);
        renderHandler.sendMessage(message);

        Message message1 = Message.obtain();
        message1.what = MSG_DRAW_GL;
        renderHandler.sendMessage(message1);

        surfaceWidth = w;
        surfaceHeight = h;
    }

    @Override
    public void surfaceDestroyed(@NonNull SurfaceHolder surfaceHolder) {
        Message message = Message.obtain();
        message.what = MSG_DESTROY_GL;
        renderHandler.sendMessage(message);
    }

    public void readGlPixel() {
        Message message = Message.obtain();
        message.what = MSG_READ_PIXEL_GL;
        renderHandler.sendMessage(message);
    }

    public void readYuvData() {
        Message message = Message.obtain();
        message.what = MSG_READ_YUV_GL;
        renderHandler.sendMessage(message);
    }

    public void updateBitmap() {
        Message message = Message.obtain();
        message.what = MSG_UPDATE_BITMAP_GL;
        renderHandler.sendMessage(message);
    }

    public void setYuvData(byte[] yData, byte[] uvData, int width, int height) {
        Message message = Message.obtain();
        message.what = MSG_UPDATE_YUV_GL;
        message.obj = new YUVBean(yData, uvData, width, height);
        renderHandler.sendMessage(message);
    }

    public void release() {
        // todo: mind thread synchronization. If release() runs before surfaceDestroyed
        // has been handled on the render thread, resources are leaked.
        if (null != baseOpengl) {
            baseOpengl.release();
        }
    }

    public void requestRender() {
        Message message = Message.obtain();
        message.what = MSG_DRAW_GL;
        renderHandler.sendMessage(message);
    }

    public interface OnOpenGlListener {
        BaseOpengl onOpenglCreate();

        Bitmap requestBitmap();

        void readPixelResult(byte[] bytes);

        void readYUVResult(byte[] bytes);
    }
}

The Java code of BaseOpengl:

public class BaseOpengl {

    public static final int YUV_DATA_TYPE_NV12 = 0;
    public static final int YUV_DATA_TYPE_NV21 = 1;

    // Triangle
    public static final int DRAW_TYPE_TRIANGLE = 0;
    // Quad
    public static final int DRAW_TYPE_RECT = 1;
    // Texture mapping
    public static final int DRAW_TYPE_TEXTURE_MAP = 2;
    // Matrix transform
    public static final int DRAW_TYPE_MATRIX_TRANSFORM = 3;
    // VBO/VAO
    public static final int DRAW_TYPE_VBO_VAO = 4;
    // EBO
    public static final int DRAW_TYPE_EBO_IBO = 5;
    // FBO
    public static final int DRAW_TYPE_FBO = 6;
    // PBO
    public static final int DRAW_TYPE_PBO = 7;
    // Rendering YUV NV12 and NV21 data
    public static final int DRAW_YUV_RENDER = 8;
    // Converting an RGB image to NV21
    public static final int DRAW_RGB_TO_YUV = 9;

    public long glNativePtr;
    protected EGLHelper eglHelper;
    protected int drawType;

    public BaseOpengl(int drawType) {
        this.drawType = drawType;
        this.eglHelper = new EGLHelper();
    }

    public void surfaceCreated(Surface surface) {
        Log.v("fly_learn_opengl", "------------surfaceCreated:" + surface);
        eglHelper.surfaceCreated(surface);
    }

    public void surfaceChanged(int width, int height) {
        Log.v("fly_learn_opengl", "------------surfaceChanged:" + Thread.currentThread());
        eglHelper.surfaceChanged(width, height);
    }

    public void surfaceDestroyed() {
        Log.v("fly_learn_opengl", "------------surfaceDestroyed:" + Thread.currentThread());
        eglHelper.surfaceDestroyed();
    }

    public void release() {
        if (glNativePtr != 0) {
            n_free(glNativePtr, drawType);
            glNativePtr = 0;
        }
    }

    public void onGlDraw() {
        Log.v("fly_learn_opengl", "------------onDraw:" + Thread.currentThread());
        // Lazily create the native renderer on first use
        if (glNativePtr == 0) {
            glNativePtr = n_gl_nativeInit(eglHelper.nativePtr, drawType);
        }
        if (glNativePtr != 0) {
            n_onGlDraw(glNativePtr, drawType);
        }
    }

    public void setBitmap(Bitmap bitmap) {
        if (glNativePtr == 0) {
            glNativePtr = n_gl_nativeInit(eglHelper.nativePtr, drawType);
        }
        if (glNativePtr != 0) {
            n_setBitmap(glNativePtr, bitmap);
        }
    }

    public void setYuvData(byte[] yData, byte[] uvData, int width, int height) {
        if (glNativePtr != 0) {
            n_setYuvData(glNativePtr, yData, uvData, width, height, drawType);
        }
    }

    public void setMvpMatrix(float[] mvp) {
        if (glNativePtr == 0) {
            glNativePtr = n_gl_nativeInit(eglHelper.nativePtr, drawType);
        }
        if (glNativePtr != 0) {
            n_setMvpMatrix(glNativePtr, mvp);
        }
    }

    public byte[] readPixel() {
        if (glNativePtr != 0) {
            return n_readPixel(glNativePtr, drawType);
        }
        return null;
    }

    public byte[] readYUVResult() {
        if (glNativePtr != 0) {
            return n_readYUV(glNativePtr, drawType);
        }
        return null;
    }

    // Draw
    private native void n_onGlDraw(long ptr, int drawType);

    private native void n_setMvpMatrix(long ptr, float[] mvp);

    private native void n_setBitmap(long ptr, Bitmap bitmap);

    protected native long n_gl_nativeInit(long eglPtr, int drawType);

    private native void n_free(long ptr, int drawType);

    private native byte[] n_readPixel(long ptr, int drawType);

    private native byte[] n_readYUV(long ptr, int drawType);

    private native void n_setYuvData(long ptr, byte[] yData, byte[] uvData, int width, int height, int drawType);
}

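After the converted YUV data has been read back and saved, pull the file to a computer and open it in YUVViewer to check whether the conversion really succeeded.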
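Besides viewing the file, a quick programmatic spot check is to decode a single pixel of the saved NV21 buffer back to RGB and compare it with the source image. The sketch below is an assumption-laden illustration: `Rgb`, `toByte` and `nv21PixelToRgb` are hypothetical names, and the inverse coefficients (1.140, 0.395, 0.581, 2.032) are the commonly quoted inverse of the analog Y'UV coefficients used in the fragment shader, not values taken from this article.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper type: one 8-bit RGB pixel.
struct Rgb {
    uint8_t r, g, b;
};

// Clamp a normalized value to [0, 1] and convert it to a byte.
inline uint8_t toByte(double v) {
    v = std::min(1.0, std::max(0.0, v));
    return static_cast<uint8_t>(std::lround(v * 255.0));
}

// Decode the pixel at (x, y) from an NV21 buffer (Y plane, then interleaved V, U pairs).
inline Rgb nv21PixelToRgb(const uint8_t* nv21, int width, int height, int x, int y) {
    assert(nv21 != nullptr && x >= 0 && x < width && y >= 0 && y < height);
    const double luma = nv21[y * width + x] / 255.0;
    // Each VU pair is shared by a 2 x 2 block of pixels.
    const uint8_t* vu = nv21 + width * height + (y / 2) * width + (x / 2) * 2;
    const double v = vu[0] / 255.0 - 0.5;
    const double u = vu[1] / 255.0 - 0.5;
    return {toByte(luma + 1.140 * v),
            toByte(luma - 0.395 * u - 0.581 * v),
            toByte(luma + 2.032 * u)};
}
```

Since chroma is subsampled and V is clamped for saturated colours, decoded values will only match the source approximately; neutral grey areas are the easiest place to compare.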

References

https://juejin.cn/post/7025223104569802789

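Articles in this series

OpenGL ES: Setting Up the EGL Environment

OpenGL ES: Shaders

OpenGL ES: Drawing a Triangle

OpenGL ES: Drawing a Quad

OpenGL ES: Texture Mapping

OpenGL ES: VBO and VAO

OpenGL ES: EBO

OpenGL ES: FBO

OpenGL ES: PBO

OpenGL ES: Rendering YUV Data

Some Theory on YUV-to-RGB Conversion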
