We recently upgraded Flink from 1.8 to 1.11. When writing data to HDFS, we found that the output files were all named like this:

.part-0-0.inprogress.aa4a310c-7b48-4dee-b153-2a4f21ef10b3

.part-0-0.inprogress.b7e69438-6573-46c9-ae02-fab11db802cf

.part-0-0.inprogress.bcbf1657-4959-4c92-8dca-084346924f0c

.part-0-0.inprogress.cf18c5d5-bfcb-41d3-8177-5d450a1a469e

In 1.8 they looked like this:

part-0-0.pending

part-0-1.pending

part-0-2.pending

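The file name itself does not affect usage, but the leading "." is a problem, because a name that starts with "." marks a hidden file. Hive, for example, does not read such files, and Flink itself also ignores files whose names start with "." when it reads a directory.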
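To make the effect concrete, here is a minimal sketch of the kind of name-based filter a reader applies. It is not Hive's or Flink's actual code; the class and method names (`HiddenFileFilter`, `isVisible`, `visibleFiles`) are made up for illustration, and it only models the "." rule described above.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

// Hypothetical helper: models the "skip files starting with '.'" rule only.
final class HiddenFileFilter {

    /** Returns true if a reader following the rule above would read this file. */
    static boolean isVisible(String fileName) {
        return !fileName.startsWith(".");
    }

    /** Keeps only the file names a reader would actually pick up. */
    static List<String> visibleFiles(List<String> fileNames) {
        return fileNames.stream()
                .filter(HiddenFileFilter::isVisible)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        List<String> files = Arrays.asList(
                ".part-0-0.inprogress.aa4a310c-7b48-4dee-b153-2a4f21ef10b3",
                "part-0-0.pending");
        System.out.println(visibleFiles(files)); // prints [part-0-0.pending]
    }
}
```

With the 1.11 naming, every file in the directory is filtered out, so a downstream reader sees an empty directory.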

Note: because this Flink job cares more about latency, checkpointing is not enabled, so the output files are never committed (that is, .part-0-0.inprogress.cf18c5d5-bfcb-41d3-8177-5d450a1a469e is never renamed to part-0-0).

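First, here is how the StreamingFileSink is used: files roll once every 24 hours (or after 5 minutes of inactivity), and each file is at most 128 MB.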

    // One bucket (directory) per day, in Shanghai time
    DateTimeBucketAssigner<String> dateTimeBucketAssigner =
        new DateTimeBucketAssigner<>("yyyy-MM-dd", ZoneId.of("Asia/Shanghai"));

    StreamingFileSink<String> sink = StreamingFileSink
        .forRowFormat(new Path(path), new SimpleStringEncoder<String>("UTF-8"))
        .withBucketAssigner(dateTimeBucketAssigner)
        .withRollingPolicy(
            DefaultRollingPolicy.builder()
                .withRolloverInterval(TimeUnit.HOURS.toMillis(24))    // roll every 24 hours
                .withInactivityInterval(TimeUnit.MINUTES.toMillis(5)) // or after 5 idle minutes
                .withMaxPartSize(128 * 1024 * 1024)                   // or at 128 MB
                .build())
        .build();

    stream.addSink(sink);

For each record, the sink's invoke method calls StreamingFileSinkHelper.onElement:

    public void invoke(IN value, Context context) throws Exception {
        this.helper.onElement(value, context.currentProcessingTime(), context.timestamp(), context.currentWatermark());
    }

That in turn calls Buckets.onElement:

    @VisibleForTesting
    public Bucket<IN, BucketID> onElement(IN value, Context context) throws Exception {
        return this.onElement(value, context.currentProcessingTime(), context.timestamp(), context.currentWatermark());
    }

    public Bucket<IN, BucketID> onElement(IN value, long currentProcessingTime, @Nullable Long elementTimestamp, long currentWatermark) throws Exception {
        this.bucketerContext.update(elementTimestamp, currentWatermark, currentProcessingTime);
        // Pick the bucket for this record, then write the record into it
        BucketID bucketId = this.bucketAssigner.getBucketId(value, this.bucketerContext);
        Bucket<IN, BucketID> bucket = this.getOrCreateBucketForBucketId(bucketId);
        bucket.write(value, currentProcessingTime);
        this.maxPartCounter = Math.max(this.maxPartCounter, bucket.getPartCounter());
        return bucket;
    }

Which in turn calls Bucket.write:

    void write(IN element, long currentTime) throws IOException {
        // Roll when no part file is open yet, or when the rolling policy says so
        if (this.inProgressPart == null || this.rollingPolicy.shouldRollOnEvent(this.inProgressPart, element)) {
            if (LOG.isDebugEnabled()) {
                LOG.debug("Subtask {} closing in-progress part file for bucket id={} due to element {}.", new Object[]{this.subtaskIndex, this.bucketId, element});
            }
            this.rollPartFile(currentTime);
        }
        this.inProgressPart.write(element, currentTime);
    }

Debugging shows that the output file is created in rollPartFile (put simply, every time the file rolls, a new file has to be created to hold the new data).
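The rolling decision can be modelled roughly as follows. This is a simplified sketch, not Flink's DefaultRollingPolicy: the class `RollingDecisionSketch` and its method names are hypothetical, and the exact comparisons in Flink may differ. It only illustrates the three thresholds configured on the sink above (size, rollover interval, inactivity interval).

```java
// Simplified, hypothetical model of a size/time-based rolling decision.
// Not Flink's DefaultRollingPolicy; the exact comparisons there may differ.
final class RollingDecisionSketch {
    private final long maxPartSizeBytes;
    private final long rolloverIntervalMs;
    private final long inactivityIntervalMs;

    RollingDecisionSketch(long maxPartSizeBytes, long rolloverIntervalMs, long inactivityIntervalMs) {
        this.maxPartSizeBytes = maxPartSizeBytes;
        this.rolloverIntervalMs = rolloverIntervalMs;
        this.inactivityIntervalMs = inactivityIntervalMs;
    }

    /** Checked when a record arrives: roll once the open file has reached the size limit. */
    boolean shouldRollOnEvent(long currentSizeBytes) {
        return currentSizeBytes >= maxPartSizeBytes;
    }

    /** Checked on a timer: roll if the file is too old or has been idle for too long. */
    boolean shouldRollOnTime(long nowMs, long creationTimeMs, long lastWriteTimeMs) {
        return nowMs - creationTimeMs >= rolloverIntervalMs
                || nowMs - lastWriteTimeMs >= inactivityIntervalMs;
    }

    public static void main(String[] args) {
        RollingDecisionSketch policy =
                new RollingDecisionSketch(128L * 1024 * 1024, 24L * 60 * 60 * 1000, 5L * 60 * 1000);
        System.out.println(policy.shouldRollOnEvent(1024));            // prints false
        System.out.println(policy.shouldRollOnTime(300_000L, 0L, 0L)); // prints true
    }
}
```

Whenever one of these checks fires, the current part file is closed and the next record triggers the creation of a new one.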

Here is the source of rollPartFile:

    private void rollPartFile(long currentTime) throws IOException {
        this.closePartFile();
        Path partFilePath = this.assembleNewPartPath();
        // The new in-progress file is opened here
        this.inProgressPart = this.bucketWriter.openNewInProgressFile(this.bucketId, partFilePath, currentTime);
        if (LOG.isDebugEnabled()) {
            LOG.debug("Subtask {} opening new part file \"{}\" for bucket id={}.", new Object[]{this.subtaskIndex, partFilePath.getName(), this.bucketId});
        }
        ++this.partCounter;
    }

That naturally leads to the this.bucketWriter.openNewInProgressFile method:

    public InProgressFileWriter<IN, BucketID> openNewInProgressFile(BucketID bucketID, Path path, long creationTime) throws IOException {
        return this.openNew(bucketID, this.recoverableWriter.open(path), path, creationTime);
    }

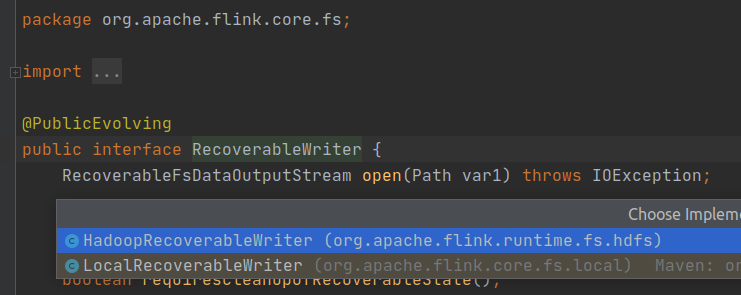

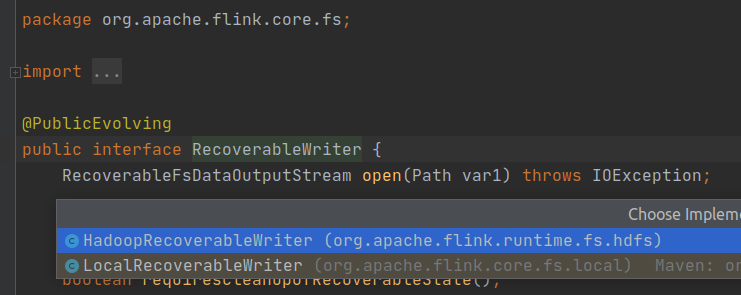

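this.recoverableWriter.open(path) is where the file is created. RecoverableWriter has two implementations, LocalRecoverableWriter and HadoopRecoverableWriter. (In my local debugging session it was LocalRecoverableWriter. The writer is chosen when the output Buckets are created, based on the scheme of the output path: hdfs:// or file://.)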
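The scheme-based choice can be pictured like this. It is only an illustration of the idea, not how Flink resolves file systems internally: `WriterChoiceSketch` and `writerFor` are hypothetical names, the example paths are made up, and only the two schemes mentioned above are covered.

```java
import java.net.URI;

// Hypothetical illustration: maps the two schemes mentioned above to the
// writer class names from the post. Flink's real lookup is more involved.
final class WriterChoiceSketch {

    /** Returns the name of the writer associated with the path's scheme. */
    static String writerFor(String outputPath) {
        String scheme = URI.create(outputPath).getScheme();
        if ("file".equals(scheme)) {
            return "LocalRecoverableWriter";
        }
        if ("hdfs".equals(scheme)) {
            return "HadoopRecoverableWriter";
        }
        throw new IllegalArgumentException("Scheme not covered by this sketch: " + scheme);
    }

    public static void main(String[] args) {
        System.out.println(writerFor("file:///tmp/out"));           // prints LocalRecoverableWriter
        System.out.println(writerFor("hdfs://namenode:8020/data")); // prints HadoopRecoverableWriter
    }
}
```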

The two implementations of RecoverableWriter:

    public RecoverableFsDataOutputStream open(Path filePath) throws IOException {
        File targetFile = this.fs.pathToFile(filePath);
        File tempFile = generateStagingTempFilePath(targetFile);
        File parent = tempFile.getParentFile();
        if (parent != null && !parent.mkdirs() && !parent.exists()) {
            throw new IOException("Failed to create the parent directory: " + parent);
        } else {
            return new LocalRecoverableFsDataOutputStream(targetFile, tempFile);
        }
    }

    static File generateStagingTempFilePath(File targetFile) {
        Preconditions.checkArgument(!targetFile.isDirectory(), "targetFile must not be a directory");
        File parent = targetFile.getParentFile();
        String name = targetFile.getName();
        Preconditions.checkArgument(parent != null, "targetFile must not be the root directory");

        // Keep generating names until one is found that does not exist yet
        File candidate;
        do {
            candidate = new File(parent, "." + name + ".inprogress." + UUID.randomUUID().toString());
        } while (candidate.exists());

        return candidate;
    }

The open method of LocalRecoverableWriter creates the output stream and specifies both the target file and the temporary file.

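The temporary file is the file that exists while data is being written. Its name is produced by generateStagingTempFilePath, which builds it under the parent directory as "." + name + ".inprogress." + UUID.randomUUID().toString(). That is why the output files have the names shown at the top.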
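The naming expression can be tried in isolation with plain JDK classes. The sketch below reuses the same string concatenation; the class name `StagingNameSketch` and the method `stagingName` are made up for this example.

```java
import java.util.UUID;

// Hypothetical stand-alone version of the naming expression quoted above.
final class StagingNameSketch {

    /** Builds a temp-file name: ".", the target name, ".inprogress.", and a random UUID. */
    static String stagingName(String targetName) {
        return "." + targetName + ".inprogress." + UUID.randomUUID().toString();
    }

    public static void main(String[] args) {
        String name = stagingName("part-0-0");
        // The UUID part differs on every run, e.g. .part-0-0.inprogress.<random uuid>
        System.out.println(name);
        System.out.println(name.startsWith(".")); // prints true
    }
}
```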

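The target file is the final output file. When the Flink job performs a checkpoint, the temporary file is moved (renamed, in the Hadoop case) to the target file. For example, this is the commit method of LocalRecoverableFsDataOutputStream.LocalCommitter, the committer for LocalRecoverableWriter's output stream: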

    public void commit() throws IOException {
        File src = this.recoverable.tempFile();
        File dest = this.recoverable.targetFile();
        if (src.length() != this.recoverable.offset()) {
            throw new IOException("Cannot clean commit: File has trailing junk data.");
        } else {
            try {
                // Preferred path: atomic move of the temp file onto the target
                Files.move(src.toPath(), dest.toPath(), StandardCopyOption.ATOMIC_MOVE);
            } catch (AtomicMoveNotSupportedException | UnsupportedOperationException var4) {
                // Fallback: plain rename
                if (!src.renameTo(dest)) {
                    throw new IOException("Committing file failed, could not rename " + src + " -> " + dest);
                }
            } catch (FileAlreadyExistsException var5) {
                throw new IOException("Committing file failed. Target file already exists: " + dest);
            }
        }
    }
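The same move-on-commit pattern can be reproduced with nothing but java.nio.file. The following is a minimal sketch under my own assumptions, not the Flink class: `CommitSketch`, its `commit` signature, and the file names in `main` are hypothetical, and the length check stands in for the offset check in the code above.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.AtomicMoveNotSupportedException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

// Hypothetical stand-alone version of the "move temp file to target" commit step.
final class CommitSketch {

    /** Moves tempFile to targetFile, refusing to commit if the length is not the expected one. */
    static void commit(Path tempFile, Path targetFile, long expectedLength) throws IOException {
        long actualLength = Files.size(tempFile);
        if (actualLength != expectedLength) {
            throw new IOException("Cannot commit: expected " + expectedLength + " bytes, found " + actualLength);
        }
        try {
            Files.move(tempFile, targetFile, StandardCopyOption.ATOMIC_MOVE);
        } catch (AtomicMoveNotSupportedException e) {
            // Fall back to a plain (possibly non-atomic) move
            Files.move(tempFile, targetFile);
        }
    }

    public static void main(String[] args) throws IOException {
        Path dir = Files.createTempDirectory("commit-sketch");
        Path temp = dir.resolve(".part-0-0.inprogress.demo");
        Path target = dir.resolve("part-0-0");
        Files.write(temp, "hello\n".getBytes(StandardCharsets.UTF_8)); // 6 bytes
        commit(temp, target, 6);
        System.out.println(Files.exists(target) + " " + Files.exists(temp)); // prints "true false"
    }
}
```

Until commit runs, only the dot-prefixed file exists, which matches what we see when checkpointing is disabled.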

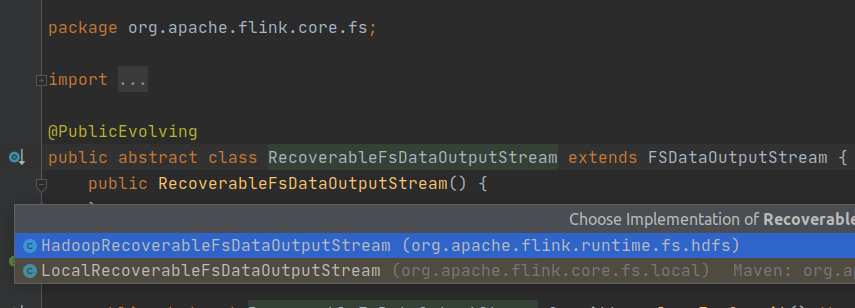

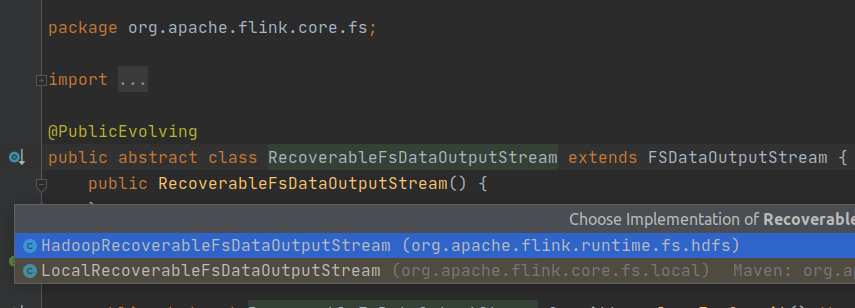

The two implementations of RecoverableFsDataOutputStream:

With the code that names the temporary file and the code that commits it both in view, making the change could not be simpler.

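Note: this change does not alter the end-to-end consistency of the Flink job itself, but downstream consumers will read the data earlier, so their consistency is affected.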
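The post does not show the change itself. One possible reading, sketched here purely as an assumption, is to make the prefix of the temporary file name something other than a fixed ".". The class `VisibleStagingNameSketch` and its `prefix` parameter are hypothetical and are not part of Flink.

```java
import java.util.UUID;

// Hypothetical variant of the naming expression with a caller-chosen prefix.
// An empty prefix yields a name that readers no longer treat as hidden.
final class VisibleStagingNameSketch {

    /** Builds prefix + targetName + ".inprogress." + a random UUID. */
    static String stagingName(String prefix, String targetName) {
        return prefix + targetName + ".inprogress." + UUID.randomUUID().toString();
    }

    public static void main(String[] args) {
        System.out.println(stagingName(".", "part-0-0").startsWith(".")); // prints true
        System.out.println(stagingName("", "part-0-0").startsWith("."));  // prints false
    }
}
```

With an empty prefix the in-progress file becomes visible to Hive and to Flink's own directory reads, which is exactly why downstream sees data earlier.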
